Regionalized Edge Computing Platforms: The 2026 Definitive Guide

The centralization of data processing has reached a point of diminishing returns. As industrial automation, autonomous logistics, and immersive real-time applications demand latencies measured in single-digit milliseconds, the round trip to a distant, centralized mega-datacenter has become a structural bottleneck. The industry is now witnessing a broad “swing of the pendulum” back toward the periphery of the network.

This shift is characterized by the emergence of highly localized, geographically tethered infrastructure. Unlike the “Broad Edge,” which might encompass any device outside a central core, the current movement focuses on the strategic placement of compute resources within specific metropolitan areas or industrial clusters. This is not merely about moving servers closer to users; it is about creating a resilient, “thick” layer of infrastructure that can operate autonomously, process massive localized data streams without overwhelming backhaul circuits, and maintain strict adherence to regional data sovereignty mandates.

The architectural challenge lies in managing this fragmentation without sacrificing the ease of orchestration that the cloud originally provided. Orchestrating a dozen centralized regions is a solved problem; orchestrating ten thousand localized nodes is a frontier. Navigating this transition requires a forensic understanding of network topology, localized power constraints, and the shifting economics of distributed hardware.

This inquiry serves as a definitive reference for infrastructure architects and digital strategists. It moves beyond the ephemeral buzzwords of the telecom industry to examine the deep systemic logic required to build and govern distributed environments.

Regionalized Edge Computing Platforms

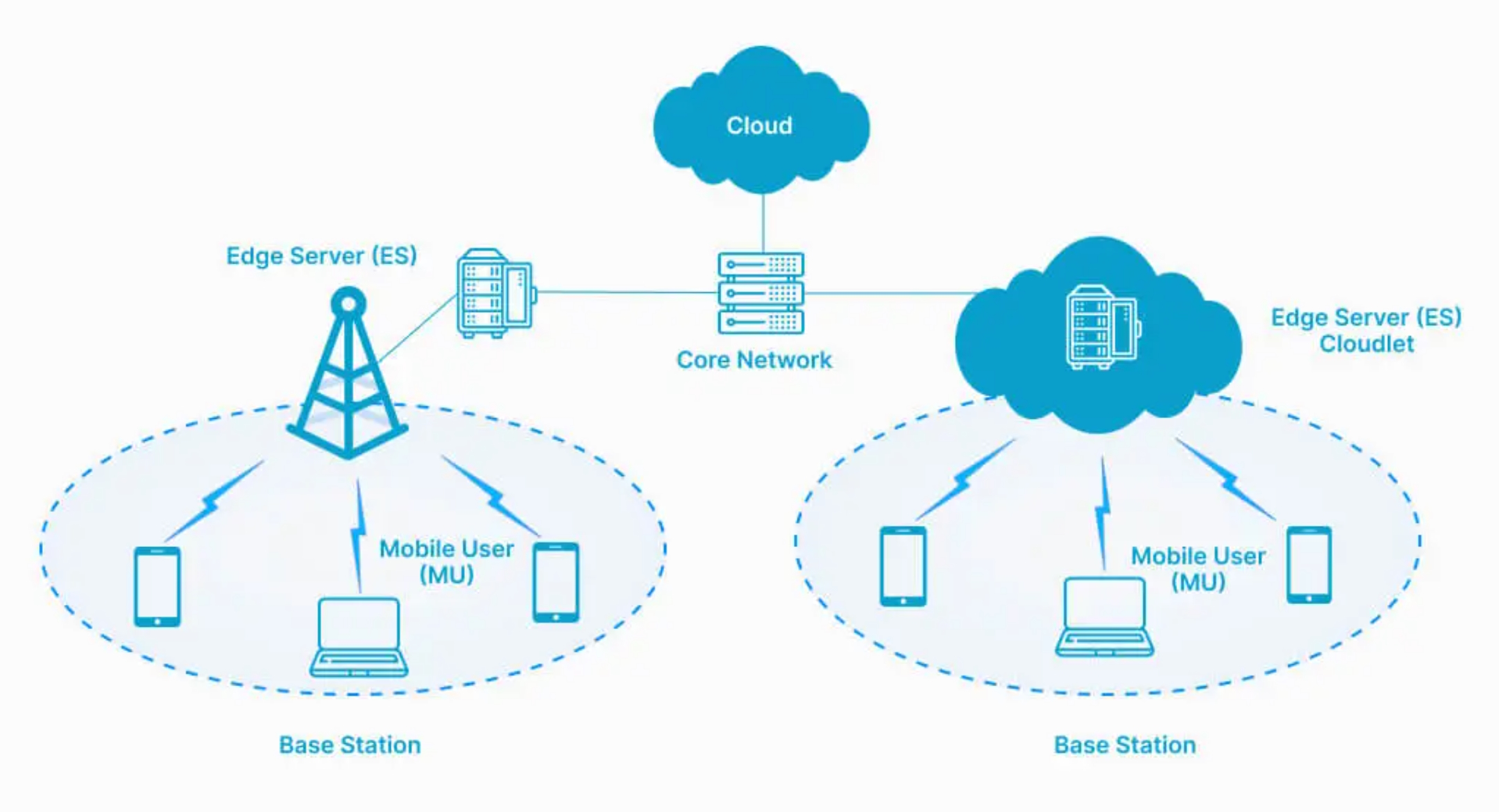

The transition to Regionalized Edge Computing Platforms represents a fundamental departure from the “Global Region” model of the last decade. These platforms are defined by their “Topological Proximity”: the placement of compute and storage assets within a specific network tier where latency is minimized and data transit costs are sharply reduced. They function as a localized “Mesh” that extends the cloud’s capabilities into the physical fabric of a city or a rural industrial zone.

Multi-Perspective Explanation

From the perspective of a network engineer, the regionalized edge is a solution to the “Last Mile” congestion problem. Terminating data at a local exchange point relieves pressure on the long-haul fiber backbone. From a software architect’s view, it is a “Distributed State” challenge: the goal is to determine which parts of an application’s logic must run at the edge for speed and which should remain in the core for heavy-duty analytics. From a legal and compliance perspective, regionalization is a “Sovereignty Tool,” ensuring that sensitive data (such as medical records or government telemetry) never leaves a defined jurisdictional boundary.

Oversimplification and Risks

A recurring oversimplification is the “Mini-Cloud” fallacy—the idea that an edge node is just a smaller version of a centralized datacenter. In reality, edge nodes face severe constraints in power, physical security, and cooling. They cannot rely on the “Infinite Elasticity” of the hyperscale cloud. The risk of treating the edge as a miniature core is “Operational Fragility,” where a localized power surge or a cooling failure in a telco closet can take down a critical regional service that has no immediate failover. True regionalization requires a “Graceful Degradation” strategy, where the edge node can function in “Island Mode” if the connection to the central core is severed.

Deep Contextual Background: The Decentralization Cycle

The history of computing is a recurring cycle between centralization and distribution. The mainframe era was the ultimate expression of centralization, which was disrupted by the distributed power of the personal computer and the local area network (LAN). The cloud era brought everything back to the center for the sake of efficiency and managed services. We are now entering the fourth phase: “Intelligent Distribution.”

What differentiates this era from previous distributed models is the “Control Plane.” In the 1990s, managing a distributed network was a manual, error-prone task. Today, the “Regionalized Edge” is managed via “Cloud-Native” abstractions. We are using the tools of centralization (Kubernetes, serverless, automated CI/CD) to manage a footprint that is geographically fragmented. This evolution reflects the maturity of software-defined infrastructure, allowing us to treat a thousand dispersed nodes as a single, programmable entity.

Conceptual Frameworks and Mental Models

To analyze distributed infrastructure with professional depth, several mental models are required:

1. The “Gravity of Data” Framework

Data has “Mass.” The larger the dataset, the harder and more expensive it is to move. This framework dictates that “Compute should move to the Data,” not vice versa. In a regionalized model, raw telemetry is processed at the edge, and only the “Metadata” or the “Insights” are sent back to the core, reducing bandwidth costs by orders of magnitude.

2. The “Latency Budget” Model

Every application has a maximum tolerable delay before the user experience or the mechanical process fails. This model maps applications to specific network tiers:

- Core (100ms+): Long-term storage, deep learning training.
- Regional (20-40ms): Web content, standard databases.
- Edge (1-10ms): Autonomous vehicles, robotic haptics, augmented reality.
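The tier mapping above can be sketched as a small lookup. This is a minimal illustration, not a placement algorithm: the function name `tier_for_budget` is hypothetical, and because the published ranges leave gaps (10-20ms and 40-100ms), the sketch assumes a workload is assigned to the most centralized tier whose upper latency bound still fits inside its budget.

```python
def tier_for_budget(budget_ms: float) -> str:
    """Return the most centralized tier that can meet a latency budget.

    Tier bounds come from the list above. Assumption of this sketch:
    a tier qualifies only if its worst listed latency fits the budget
    (regional: 40 ms, edge: 10 ms); the core is treated as 100 ms.
    """
    if budget_ms <= 0:
        raise ValueError("latency budget must be positive")
    if budget_ms >= 100:
        return "core"      # tolerant workloads: storage, model training
    if budget_ms >= 40:
        return "regional"  # web content, standard databases
    # Anything tighter than the regional worst case falls to the edge,
    # even if the budget is below what the edge can realistically deliver.
    return "edge"


if __name__ == "__main__":
    for budget in (5, 30, 50, 250):
        print(budget, "ms ->", tier_for_budget(budget))
```

Choosing the most centralized tier that fits reflects the cost logic of the model: edge capacity is scarce, so it is reserved for workloads that cannot tolerate anything slower.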

3. The “Ephemeral vs. Persistent” Logic

The edge is an ideal environment for “Ephemeral Compute”—short-lived tasks that require an immediate response. It is a poor environment for long-term “Persistent” data storage due to the higher cost-per-gigabyte and the risk of physical localized disasters. A robust regional strategy uses the edge for “Action” and the core for “Memory.”

Key Categories: Hardware, Network, and Provider Typologies

Building a regionalized footprint requires selecting the right “Physicality” for the workload.

Realistic Decision Logic

The choice of platform is driven by the “Point of Ingress.” If the data enters the network via a 5G tower, a MEC (Telco Edge) platform is the logical choice. If the data is generated within a private warehouse, an Industrial Edge appliance on a local fiber loop is superior. The decision logic must prioritize “Data Path Shortening”—identifying the shortest physical distance the packet must travel to reach a processor.

Detailed Real-World Scenarios

Scenario 1: The “Smart City” Intersection

A metropolitan area implements real-time traffic management using thousands of high-resolution cameras.

- The Conflict: Sending 4K video streams from 500 intersections to the cloud would cost $2M/month in bandwidth.
- The Solution: Regionalized Edge Computing Platforms located in localized neighborhoods process the video to identify license plates and traffic flow.
- Result: Bandwidth is reduced by 99%, as only the “Traffic Count” (kilobytes) is sent to the central municipal database.
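The arithmetic behind a reduction of this size can be sketched as follows. Every input here is an assumption made for illustration, not a figure from the scenario: one stream per intersection, roughly 15 Mbit/s per 4K stream, about 2 KB/s of summarized traffic counts per stream, and a 30-day month.

```python
SECONDS_PER_MONTH = 30 * 24 * 3600  # assumed 30-day month


def monthly_bytes(streams: int, bytes_per_second: float) -> float:
    """Total bytes sent upstream per month for a number of streams."""
    return streams * bytes_per_second * SECONDS_PER_MONTH


def reduction_ratio(raw_bytes_per_s: float, summary_bytes_per_s: float) -> float:
    """Fraction of upstream traffic removed by summarizing at the edge."""
    if raw_bytes_per_s <= 0:
        raise ValueError("raw rate must be positive")
    return 1 - summary_bytes_per_s / raw_bytes_per_s


# Hypothetical inputs (assumptions, see the paragraph above).
STREAMS = 500
RAW_BYTES_PER_S = 15_000_000 / 8   # 15 Mbit/s expressed in bytes
SUMMARY_BYTES_PER_S = 2_000        # a few kilobytes of counts per second

if __name__ == "__main__":
    raw = monthly_bytes(STREAMS, RAW_BYTES_PER_S)
    summary = monthly_bytes(STREAMS, SUMMARY_BYTES_PER_S)
    ratio = reduction_ratio(RAW_BYTES_PER_S, SUMMARY_BYTES_PER_S)
    print(f"raw upstream:     {raw / 1e15:.2f} PB/month")
    print(f"summary upstream: {summary / 1e12:.2f} TB/month")
    print(f"reduction:        {ratio:.2%}")
```

Under these assumed rates the reduction comfortably exceeds 99%; the exact figure depends on the video bitrate and on how much metadata each node emits.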

Scenario 2: The “Offline-First” Factory

A remote manufacturing plant operates in an area with unreliable satellite backhaul.

- The Failure Mode: A cloud-dependent assembly line stops every time the satellite link drops due to weather.
- The Fix: Deploying a “Thick Edge” cluster on-site that handles the “Control Loop” for the robots locally.
- The Resilience: The plant continues to operate at full capacity during a 4-hour outage; data is “Batched” and synced to the core once the link is restored.
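The batch-and-sync behavior in this scenario is commonly built as a store-and-forward buffer. The sketch below is one minimal way to express it; the class name `StoreAndForward` and the `send_batch` callback are hypothetical, and a production system would persist the buffer to disk rather than hold it in memory.

```python
from collections import deque
from itertools import islice
from typing import Callable, Deque, Dict, List


class StoreAndForward:
    """Buffer events locally and drain them in order when the link is up.

    `send_batch` is a caller-supplied function (an assumption of this
    sketch) that returns True when the core acknowledged the batch.
    """

    def __init__(self, send_batch: Callable[[List[Dict]], bool],
                 max_records: int = 100_000) -> None:
        self._send_batch = send_batch
        # With maxlen set, the oldest records are dropped once the buffer
        # is full, which bounds memory during very long outages.
        self._buffer: Deque[Dict] = deque(maxlen=max_records)

    def record(self, event: Dict) -> None:
        """Always succeeds locally, regardless of link state."""
        self._buffer.append(event)

    def pending(self) -> int:
        return len(self._buffer)

    def flush(self, batch_size: int = 500) -> int:
        """Send buffered events oldest-first; stop at the first failure."""
        sent = 0
        while self._buffer:
            batch = list(islice(self._buffer, batch_size))
            if not self._send_batch(batch):
                break  # link still down: keep everything for the next try
            for _ in batch:
                self._buffer.popleft()
            sent += len(batch)
        return sent
```

Records are removed only after a batch is acknowledged, so a link drop in the middle of a sync leaves the unsent data in place for the next attempt.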

Planning, Cost, and Resource Dynamics

The economics of the edge are the inverse of the cloud. In the cloud, compute is cheap and “Movement” is expensive. At the edge, the hardware and “Management” are expensive, but the “Proximity” saves on transit and latency penalties.

Relative Resource Impact (Per MW of Capacity)

Tools, Strategies, and Support Systems

Operating at the edge requires a “Zero-Touch” management philosophy:

- Kubernetes at the Edge (K3s/MicroK8s): Lightweight versions of orchestration tools designed for resource-constrained nodes.
- Infrastructure-as-Code (Terraform/Ansible): Ensuring that a configuration change can be pushed to 5,000 regional nodes simultaneously without manual intervention.
- SD-WAN (Software-Defined Wide Area Network): Dynamically routing traffic to the “Healthiest” edge node, bypassing localized outages.
- GitOps (ArgoCD/Flux): Using a “Git Repository” as the single source of truth for the state of the distributed network.
- Out-of-Band Management (OOBM): Specialized hardware that allows engineers to reboot a server in a remote telco closet even if the primary operating system is unresponsive.
- Distributed Tracing (OpenTelemetry): Visualizing the path of a request as it moves from a device to the edge and back to the core to identify “Latency Spikes.”
- Object Storage at the Edge (MinIO): Providing a cloud-like storage interface on local hardware for temporary data caching.
- Secure Access Service Edge (SASE): Integrating security (firewalls, ZTNA) directly into the edge node rather than backhauling traffic to a central security stack.
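The idea of steering traffic to the “Healthiest” node can be illustrated with a simple selection rule. This is a sketch of the concept only, not how any particular SD-WAN product decides: the `NodeStatus` type is hypothetical, and health is assumed to be a boolean reported by a probe, with round-trip time as the tie-breaker.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class NodeStatus:
    """Hypothetical health report for one edge node."""
    name: str
    healthy: bool
    rtt_ms: float


def pick_node(nodes: Iterable[NodeStatus]) -> Optional[NodeStatus]:
    """Return the healthy node with the lowest RTT, or None if all are down."""
    candidates = [n for n in nodes if n.healthy]
    return min(candidates, key=lambda n: n.rtt_ms, default=None)


if __name__ == "__main__":
    fleet = [
        NodeStatus("metro-east", healthy=False, rtt_ms=3.0),
        NodeStatus("metro-west", healthy=True, rtt_ms=7.5),
        NodeStatus("regional-hub", healthy=True, rtt_ms=28.0),
    ]
    print(pick_node(fleet))
```

Returning `None` when no node is healthy makes the fallback explicit, so the caller can decide whether to route to the core or fail the request.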

Risk Landscape and Failure Modes

The “Distributed Risk Matrix” is significantly more complex than centralized models.

- The “Update Storm”: Attempting to push a 5GB container update to 1,000 nodes at the same time can saturate the very backhaul links the edge was designed to protect. This requires “Staggered Rollouts.”
- Physical Tampering: Unlike a fortified datacenter, an edge node might be in a street-side cabinet. This necessitates “Disk Encryption” and “Hardware Root of Trust” (TPM) to ensure the hardware hasn’t been compromised.
- The “Configuration Drift” Trap: If a local technician makes a manual change to one node, it becomes “Unique.” Over time, these unique nodes become impossible to manage at scale.
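A staggered rollout of the kind mentioned for the “Update Storm” can be planned by splitting the fleet into waves. The sketch below assumes a small canary wave that grows geometrically up to a cap; the function name `rollout_waves` and the default sizes are illustrative choices, not recommended values.

```python
from typing import Iterator, List, Sequence


def rollout_waves(nodes: Sequence[str], canary: int = 10,
                  growth: int = 2, max_wave: int = 200) -> Iterator[List[str]]:
    """Yield successive groups of nodes to update.

    Starts with a small canary wave, multiplies the wave size by
    `growth` each step, and never exceeds `max_wave` nodes at once,
    which caps the concurrent load on backhaul links.
    """
    if canary < 1 or growth < 1 or max_wave < 1:
        raise ValueError("canary, growth and max_wave must be >= 1")
    start = 0
    size = min(canary, max_wave)
    while start < len(nodes):
        yield list(nodes[start:start + size])
        start += size
        size = min(size * growth, max_wave)


if __name__ == "__main__":
    fleet = [f"node-{i}" for i in range(1000)]
    for number, wave in enumerate(rollout_waves(fleet), start=1):
        # A real rollout would wait for health checks before continuing.
        print(f"wave {number}: {len(wave)} nodes")
```

The cap is the part that protects the backhaul: with a 5GB image, the peak transfer is bounded by `max_wave` downloads rather than by the size of the fleet.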

Governance, Maintenance, and Long-Term Adaptation

Governance in a regionalized world is about “Standardization of the Fragmented.”

- Review Cycles: A monthly audit of “Node Health” and “Firmware Parity.” Any node that deviates from the “Gold Image” should be automatically re-provisioned.
- Adjustment Triggers: If a regional node’s utilization exceeds 80% for a sustained period, it signals a “Capacity Expansion” event: either adding more nodes to the local mesh or offloading more tasks to the core.
- The Layered Checklist:
  - Continuous: Automated “Liveness Probes” to ensure the application is responding.
  - Monthly: Security scan of the “Edge Perimeter” for newly discovered vulnerabilities.
  - Annually: Physical inspection of remote sites for environmental degradation (dust, moisture, cable wear).
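The 80% adjustment trigger can be expressed as a check over recent utilization samples. In this sketch, “sustained” is assumed to mean that every one of the last `sustained` samples is above the threshold; the function name `needs_expansion`, the sample interval, and the window length are all hypothetical.

```python
from typing import Sequence


def needs_expansion(samples: Sequence[float], threshold: float = 0.80,
                    sustained: int = 12) -> bool:
    """True when the most recent `sustained` samples all exceed the threshold.

    `samples` holds utilization as fractions (0.0 to 1.0), oldest first.
    With one sample every five minutes, sustained=12 means a full hour.
    """
    if sustained < 1:
        raise ValueError("sustained must be >= 1")
    if len(samples) < sustained:
        return False  # not enough history to call it sustained
    return all(u > threshold for u in samples[-sustained:])


if __name__ == "__main__":
    hour_of_load = [0.85] * 12
    brief_spike = [0.40] * 11 + [0.95]
    print(needs_expansion(hour_of_load))  # every sample is above 0.80
    print(needs_expansion(brief_spike))   # a single spike is ignored
```

Requiring the whole window to breach the threshold keeps short bursts from triggering a capacity event.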

Measurement, Tracking, and Evaluation

How do you measure success in a regionalized environment?

- Leading Indicators: “Tail Latency” (P99), the response time that 99% of requests stay under and only the slowest 1% exceed. This is a better measure of edge health than “Average Latency.”
- Lagging Indicators: “Egress Cost Reduction” and “System Availability” during core outages.
- Quantitative Signals: “Computation Density,” or how many requests are processed locally vs. how many are offloaded to the cloud.
- Documentation Examples:
  - The “Latency Heatmap”: A visualization showing response times across different geographic regions.
  - The “Edge-to-Cloud Ratio”: A financial report tracking the shift in spending from bandwidth to local compute.
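The gap between tail and average latency is easy to see with a small calculation. The sketch below uses the nearest-rank definition of a percentile, which is one of several conventions, and the sample data is invented purely to show the effect.

```python
import math
import statistics
from typing import Sequence


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


if __name__ == "__main__":
    # Invented sample: most requests are fast, a few hit a slow path.
    latencies_ms = [5.0] * 98 + [250.0] * 2
    print("mean:", statistics.mean(latencies_ms))
    print("p50: ", percentile(latencies_ms, 50))
    print("p99: ", percentile(latencies_ms, 99))
```

In this sample the mean stays under 10 ms while the P99 lands on the slow path, which is why an average alone can hide a degraded node.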

Common Misconceptions and Industry Myths

- Myth: “Edge computing will replace the cloud.”
  - Reality: The edge is an extension of the cloud. They are complementary; the cloud is for “Scale,” and the edge is for “Speed.”
- Myth: “5G is required for edge computing.”
  - Reality: While 5G enables mobile edge, most industrial edge platforms run on existing fiber or private LTE networks.
- Myth: “The edge is less secure than the cloud.”
  - Reality: The edge reduces the “Attack Surface” by keeping sensitive data local. While physical security is a challenge, the logical security can be just as robust as the core.
- Myth: “Edge computing is only for autonomous cars.”
  - Reality: The largest current deployments are in retail (inventory), manufacturing (QA), and content delivery (streaming).

Ethical and Practical Considerations

As we distribute compute into the physical world, we must consider the “Socio-Technical” impact. Regionalization can help bridge the “Digital Divide” by bringing high-performance services to rural areas, but it also increases the “Carbon Footprint” per unit of compute due to the less efficient cooling systems in small-scale nodes. A professional architectural perspective suggests that the most ethical edge platforms are those that prioritize “Local Autonomy”—ensuring that a community’s critical digital infrastructure does not depend on a single, distant corporate entity that could be disconnected at any time.

Conclusion

The era of the “Undifferentiated Cloud” is ending. The future belongs to Regionalized Edge Computing Platforms that understand the nuance of geography and the physics of the network. In a world where every millisecond counts, the most successful enterprises will be those that learn to master the geometry of their data.